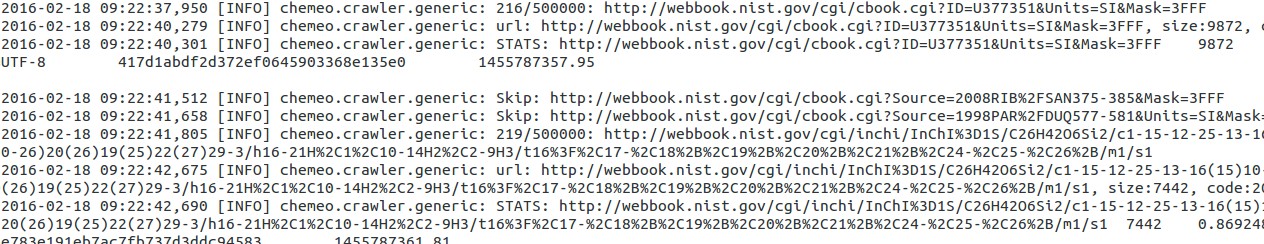

Good news: the Cheméo crawler is crawling the web again. The crawls are still performed in batches, that is, the crawler is only launched every few months, unlike Google, which visits websites every day.

This time, the crawler has been improved to discard uninteresting pages before crawling them and to rewrite URLs so that the same page is not crawled twice. For example, the two URLs:

http://my-website.com/index.php?foo=bar&bing=bong

http://my-website.com/index.php?bing=bong&foo=bar

are the same, because both query strings pass the same two keys, bing and foo, with the values bong and bar; only the order of the parameters differs. By rewriting the URLs into a single form before the crawl, the system avoids duplicate crawling. Both the crawler and the targeted website now do less work.
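As an illustration of this kind of rewriting, here is a minimal sketch in Python. It is not the Cheméo crawler's actual code: the function name `canonicalize` is hypothetical, and sorting the query parameters and dropping the fragment are assumptions about what a reasonable rewrite could look like.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def canonicalize(url: str) -> str:
    """Return the URL with its query parameters sorted (hypothetical helper)."""
    parts = urlsplit(url)
    # Sort the (key, value) pairs so that parameter order no longer matters.
    params = sorted(parse_qsl(parts.query, keep_blank_values=True))
    # Assumption: the fragment is dropped, since it is never sent to the server.
    return urlunsplit(parts._replace(query=urlencode(params), fragment=""))


a = canonicalize("http://my-website.com/index.php?foo=bar&bing=bong")
b = canonicalize("http://my-website.com/index.php?bing=bong&foo=bar")
print(a == b)  # True: both rewrite to ...index.php?bing=bong&foo=bar
```

Once both URLs rewrite to the same string, a simple set or filter lookup is enough to notice that the page is already scheduled.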

The crawler also uses Bloom filters to efficiently discard already crawled links and bad links. The best part is that the retrieved data is stored in a WARC file.
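To give an idea of how a Bloom filter can discard already seen links, here is a small sketch in Python. The `BloomFilter` class, its default size and its number of hash functions are all assumptions made for the example, not the crawler's real settings. A Bloom filter never forgets an item that was added, but it can occasionally report an unseen item as seen, which for a crawler only means that a few pages may be skipped.

```python
import hashlib


class BloomFilter:
    """Minimal Bloom filter for strings (illustrative, not the crawler's code)."""

    def __init__(self, size_bits: int = 1 << 20, num_hashes: int = 5):
        self.size = size_bits
        self.num_hashes = num_hashes
        self.bits = bytearray((size_bits + 7) // 8)

    def _positions(self, item: str):
        # Double hashing: derive all bit positions from two halves of one digest.
        digest = hashlib.sha256(item.encode("utf-8")).digest()
        h1 = int.from_bytes(digest[:8], "big")
        h2 = int.from_bytes(digest[8:16], "big") | 1
        for i in range(self.num_hashes):
            yield (h1 + i * h2) % self.size

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos >> 3] |= 1 << (pos & 7)

    def __contains__(self, item: str) -> bool:
        # True if every bit is set: "probably seen". False means "never seen".
        return all(self.bits[pos >> 3] & (1 << (pos & 7))
                   for pos in self._positions(item))


seen = BloomFilter()
seen.add("http://my-website.com/index.php?bing=bong&foo=bar")
already_crawled = "http://my-website.com/index.php?bing=bong&foo=bar" in seen
```

With these default values the filter uses 128 KiB of memory regardless of how long the URLs are, which is the reason such a structure is attractive when the list of links runs into the millions.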

A WARC file, in the Web ARChive file format, is a standard way to preserve content published online. It is a well-thought-out ISO standard with a fairly large number of tools available to manipulate the files. The advantages for the crawler are that many versions of the same page can be stored, that the file is compressed, and that it can be manipulated efficiently after creation. This way, the parsing of the pages is performed completely independently of the crawling.
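The sketch below shows, in a simplified way, why the format suits a crawler: each record is a small block of headers followed by the page content, compressed on its own and appended to the archive, so two versions of the same page can simply sit one after the other. This is a hand-written approximation of a WARC 1.0 `resource` record using only the Python standard library; the helper name `warc_resource_record` is hypothetical, and a real crawler would more likely rely on a dedicated library such as warcio.

```python
import gzip
import io
import uuid
from datetime import datetime, timezone


def warc_resource_record(uri: str, body: bytes,
                         content_type: str = "text/html") -> bytes:
    """Build one gzip-compressed, simplified WARC record (hypothetical helper)."""
    now = datetime.now(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    headers = [
        "WARC/1.0",
        "WARC-Type: resource",
        f"WARC-Record-ID: <urn:uuid:{uuid.uuid4()}>",
        f"WARC-Date: {now}",
        f"WARC-Target-URI: {uri}",
        f"Content-Type: {content_type}",
        f"Content-Length: {len(body)}",
    ]
    # Headers, a blank line, the content block, then two CRLF to end the record.
    record = "\r\n".join(headers).encode("utf-8") + b"\r\n\r\n" + body + b"\r\n\r\n"
    # Each record is its own gzip member, so records can be appended freely.
    return gzip.compress(record)


archive = io.BytesIO()  # stands in for a .warc.gz file on disk
url = "http://my-website.com/index.php?bing=bong&foo=bar"
archive.write(warc_resource_record(url, b"<html>version 1</html>"))
archive.write(warc_resource_record(url, b"<html>version 2</html>"))

# Reading back: the concatenated gzip members decompress to both records.
raw = gzip.decompress(archive.getvalue())
```

A parser can later walk through `raw` record by record, without ever contacting the website again, which is what makes the parsing independent of the crawling.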

The current crawling session is expected to last approximately a month.

Update, 23rd of February: nearly 200,000 URLs crawled in 5 days (roughly 40,000 per day), with 1.2 million to crawl; the one-month crawl is on track.